HPX documentation#

Welcome to the HPX documentation!

If you’re new to HPX you can get started with the Quick start guide. Don’t forget to read the Terminology section to learn about the most important concepts in HPX. The Examples give you a feel for how it is to write real HPX applications and the Manual contains detailed information about everything from building HPX to debugging it. There are links to blog posts and videos about HPX in Additional material.

You can find a comprehensive list of contact options on Support for deploying and using HPX. Do not hesitate to contact us if you can’t find what you are looking for in the documentation!

See Citing HPX for details on how to cite HPX in publications. See HPX users for a list of institutions and projects using HPX.

There are also available a PDF version of this documentation as well as a Single HTML Page.

What is HPX?#

HPX is a C++ Standard Library for Concurrency and Parallelism. It implements all of the corresponding facilities as defined by the C++ Standard. Additionally, in HPX we implement functionalities proposed as part of the ongoing C++ standardization process. We also extend the C++ Standard APIs to the distributed case. HPX is developed by the STE||AR group (see People).

The goal of HPX is to create a high quality, freely available, open source implementation of a new programming model for conventional systems, such as classic Linux based Beowulf clusters or multi-socket highly parallel SMP nodes. At the same time, we want to have a very modular and well designed runtime system architecture which would allow us to port our implementation onto new computer system architectures. We want to use real-world applications to drive the development of the runtime system, coining out required functionalities and converging onto a stable API which will provide a smooth migration path for developers.

The API exposed by HPX is not only modeled after the interfaces defined by the C++11/14/17/20 ISO standard. It also adheres to the programming guidelines used by the Boost collection of C++ libraries. We aim to improve the scalability of today’s applications and to expose new levels of parallelism which are necessary to take advantage of the exascale systems of the future.

HPX is a member of the High-Performance Software Foundation (HPSF) network, which signals its commitment to the open-source, high-performance computing (HPC) community. By joining HPSF - and, concurrently, the Linux Foundation - HPX gains access to a broader collaborative ecosystem that promotes shared standards, interoperable tools, and joint research initiatives, all of which accelerates the development of scalable parallel applications. This dual affiliation enhances HPX’s credibility, attracting contributors and industry partners who value the rigorous governance and transparent development practices upheld by both organizations. Consequently, HPX can more effectively influence the direction of future HPC frameworks while benefiting from the collective expertise, resources, and industry backing provided by HPSF and the Linux Foundation.

What’s so special about HPX?#

HPX exposes a uniform, standards-oriented API for ease of programming parallel and distributed applications.

It enables programmers to write fully asynchronous code using hundreds of millions of threads.

HPX provides unified syntax and semantics for local and remote operations.

HPX makes concurrency manageable with dataflow and future based synchronization.

It implements a rich set of runtime services supporting a broad range of use cases.

HPX exposes a uniform, flexible, and extendable performance counter framework which can enable runtime adaptivity

It is designed to solve problems conventionally considered to be scaling-impaired.

HPX has been designed and developed for systems of any scale, from hand-held devices to very large scale systems.

It is the first fully functional implementation of the ParalleX execution model.

HPX is published under a liberal open-source license and has an open, active, and thriving developer community.

Quick start#

The following steps will help you get started with HPX. Before getting started, make sure you have all the necessary prerequisites, which are listed in _prerequisites. After Installing HPX, you can check how to run a simple example Hello, World!. Writing task-based applications explains how you can get started with HPX. You can refer to our Migration guide if you use other APIs for parallelism (like OpenMP, MPI or Intel Threading Building Blocks (TBB)) and you would like to convert your code to HPX code.

Installing HPX#

The easiest way to install HPX on your system is by choosing one of the steps below:

vcpkg

You can download and install HPX using the vcpkg dependency manager:

$ vcpkg install hpxSpack

Another way to install HPX is using Spack:

$ spack install hpxFedora

Installation can be done with Fedora as well:

$ dnf install hpx*Arch Linux

HPX is available in the Arch User Repository (AUR) as

hpxtoo.

More information or alternatives regarding the installation can be found in the Building HPX, a detailed guide with thorough explanation of ways to build and use HPX.

Hello, World!#

To get started with this minimal example you need to create a new project

directory and a file CMakeLists.txt with the contents below in order to

build an executable using CMake and HPX:

cmake_minimum_required(VERSION 3.19)

project(my_hpx_project CXX)

find_package(HPX REQUIRED)

add_executable(my_hpx_program main.cpp)

target_link_libraries(my_hpx_program HPX::hpx HPX::wrap_main hpx::iostreams_component)

The next step is to create a main.cpp with the contents below:

// Including 'hpx/hpx_main.hpp' instead of the usual 'hpx/hpx_init.hpp' enables

// to use the plain C-main below as the direct main HPX entry point.

#include <hpx/hpx_main.hpp>

#include <hpx/iostream.hpp>

int main()

{

// Say hello to the world!

hpx::cout << "Hello World!\n" << std::flush;

return 0;

}

Then, in your project directory run the following:

$ mkdir build && cd build

$ cmake -DHPX_DIR=</path/to/hpx/installation> ..

$ make all

$ ./my_hpx_program

$ ./my_hpx_program

Hello World!

The program looks almost like a regular C++ hello world with the exception of

the two includes and hpx::cout.

When you include

hpx_main.hppHPX makes sure thatmainactually gets launched on the HPX runtime. So while it looks almost the same you can now use futures,async, parallel algorithms and more which make use of the HPX runtime with lightweight threads.hpx::coutis a replacement forstd::coutto make sure printing never blocks a lightweight thread. You can read more abouthpx::coutin The HPX I/O-streams component.

Note

You will most likely have more than one

main.cppfile in your project. See the section on Using HPX with CMake-based projects for more details on how to useadd_hpx_executable.HPX::wrap_mainis required if you are implicitly usingmain()as the runtime entry point. See Re-use the main() function as the main HPX entry point for more information.hpx::iostreams_componentis optional for a minimal project but lets us use the HPX equivalent ofstd::cout, i.e., the HPX The HPX I/O-streams component functionality in our application.You do not have to let HPX take over your main function like in the example. See Starting the HPX runtime for more details on how to initialize and run the HPX runtime.

Caution

Ensure that HPX is installed with HPX_WITH_DISTRIBUTED_RUNTIME=ON to

prevent encountering an error indicating that the HPX::iostreams_component

target is not found.

When including hpx_main.hpp the user-defined main gets renamed and

the real main function is defined by HPX. This means that the

user-defined main must include a return statement, unlike the real

main. If you do not include the return statement, you may end up with

confusing compile time errors mentioning user_main or even runtime

errors.

Writing task-based applications#

So far we haven’t done anything that can’t be done using the C++ standard library. In this section we will give a short overview of what you can do with HPX on a single node. The essence is to avoid global synchronization and break up your application into small, composable tasks whose dependencies control the flow of your application. Remember, however, that HPX allows you to write distributed applications similarly to how you would write applications for a single node (see Why HPX? and Writing distributed applications).

If you are already familiar with async and future from the C++ standard

library, the same functionality is available in HPX.

The following terminology is essential when talking about task-based C++ programs:

lightweight thread: Essential for good performance with task-based programs. Lightweight refers to smaller stacks and faster context switching compared to OS threads. Smaller overheads allow the program to be broken up into smaller tasks, which in turns helps the runtime fully utilize all processing units.

async: The most basic way of launching tasks asynchronously. Returns afuture<T>.future<T>: Represents a value of typeTthat will be ready in the future. The value can be retrieved withget(blocking) and one can check if the value is ready withis_ready(non-blocking).shared_future<T>: Same asfuture<T>but can be copied (similar tostd::unique_ptrvsstd::shared_ptr).continuation: A function that is to be run after a previous task has run (represented by a future).

thenis a method offuture<T>that takes a function to run next. Used to build up dataflow DAGs (directed acyclic graphs).shared_futures help you split up nodes in the DAG and functions likewhen_allhelp you join nodes in the DAG.

The following example is a collection of the most commonly used functionality in HPX:

#include <hpx/algorithm.hpp>

#include <hpx/future.hpp>

#include <hpx/init.hpp>

#include <iostream>

#include <random>

#include <vector>

void final_task(hpx::future<hpx::tuple<hpx::future<double>, hpx::future<void>>>)

{

std::cout << "in final_task" << std::endl;

}

int hpx_main()

{

// A function can be launched asynchronously. The program will not block

// here until the result is available.

hpx::future<int> f = hpx::async([]() { return 42; });

std::cout << "Just launched a task!" << std::endl;

// Use get to retrieve the value from the future. This will block this task

// until the future is ready, but the HPX runtime will schedule other tasks

// if there are tasks available.

std::cout << "f contains " << f.get() << std::endl;

// Let's launch another task.

hpx::future<double> g = hpx::async([]() { return 3.14; });

// Tasks can be chained using the then method. The continuation takes the

// future as an argument.

hpx::future<double> result = g.then([](hpx::future<double>&& gg) {

// This function will be called once g is ready. gg is g moved

// into the continuation.

return gg.get() * 42.0 * 42.0;

});

// You can check if a future is ready with the is_ready method.

std::cout << "Result is ready? " << result.is_ready() << std::endl;

// You can launch other work in the meantime. Let's sort a vector.

std::vector<int> v(1000000);

// We fill the vector synchronously and sequentially.

hpx::generate(hpx::execution::seq, std::begin(v), std::end(v), &std::rand);

// We can launch the sort in parallel and asynchronously.

hpx::future<void> done_sorting =

hpx::sort(hpx::execution::par( // In parallel.

hpx::execution::task), // Asynchronously.

std::begin(v), std::end(v));

// We launch the final task when the vector has been sorted and result is

// ready using when_all.

auto all = hpx::when_all(result, done_sorting).then(&final_task);

// We can wait for all to be ready.

all.wait();

// all must be ready at this point because we waited for it to be ready.

std::cout << (all.is_ready() ? "all is ready!" : "all is not ready...")

<< std::endl;

return hpx::local::finalize();

}

int main(int argc, char* argv[])

{

return hpx::local::init(hpx_main, argc, argv);

}

Try copying the contents to your main.cpp file and look at the output. It can

be a good idea to go through the program step by step with a debugger. You can

also try changing the types or adding new arguments to functions to make sure

you can get the types to match. The type of the then method can be especially

tricky to get right (the continuation needs to take the future as an argument).

Note

HPX programs accept command line arguments. The most important one is

--hpx:threads=N to set the number of OS threads used by

HPX. HPX uses one thread per core by default. Play around with the

example above and see what difference the number of threads makes on the

sort function. See Launching and configuring HPX applications for more details on

how and what options you can pass to HPX.

Tip

The example above used the construction hpx::when_all(...).then(...). For

convenience and performance it is a good idea to replace uses of

hpx::when_all(...).then(...) with dataflow. See

Dataflow for more details on dataflow.

Tip

If possible, try to use the provided parallel algorithms instead of writing your own implementation. This can save you time and the resulting program is often faster.

Next steps#

If you haven’t done so already, reading the Terminology section will help you get familiar with the terms used in HPX.

The Examples section contains small, self-contained walkthroughs of example HPX programs. The Local to remote example is a thorough, realistic example starting from a single node implementation and going stepwise to a distributed implementation.

The Manual contains detailed information on writing, building and running HPX applications.

Tutorials#

This section provides structured, video-based learning resources for HPX. The tutorials complement the reference documentation with guided, example-driven explanations of core concepts and advanced features.

Each tutorial introduces the relevant concepts and then applies them step by step through practical code examples. The focus is on demonstrating how to design, implement, and reason about parallel and distributed applications using HPX. While the main documentation emphasizes API descriptions and detailed reference material, the tutorial series adopts a practical, code-oriented approach.

The tutorials are intended for a broad audience: from beginners setting up HPX for the first time to advanced users exploring performance tuning, custom executors, and SIMD vectorization. Together, they form a structured learning path that lowers the entry barrier while progressively introducing more advanced features.

The complete video series is available on YouTube:

The code is available on Github:

HPX Tutorial Code <https://github.com/STEllAR-GROUP/HPX_Tutorials_Code>

Available Modules#

- Introduction to |hpx|

Foundational overview of HPX, including its architecture, design principles, and key capabilities in the context of high-performance computing.

- Building |hpx| on Windows/Unix

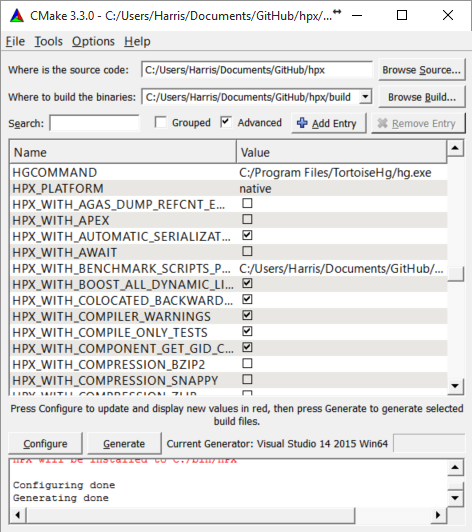

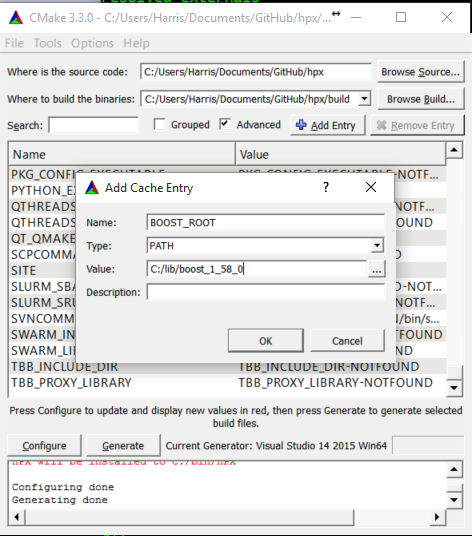

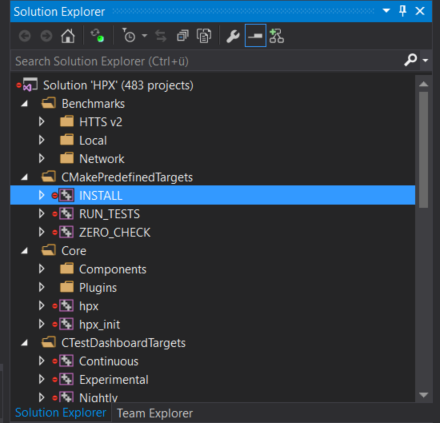

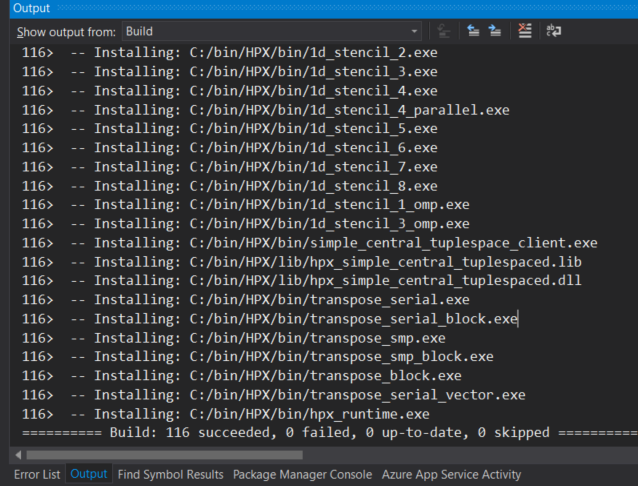

Step-by-step guidance on installing HPX and setting up the environment based on your operating system.

- Running |hpx| applications

Demonstation on how to build and run a simple Hello World example using HPX.

- Algorithms in |hpx|

Overview of the parallel algorithms provided by HPX and explanation on how execution policies unlock high-performance C++ computing.

- Vectorization and SIMD in |hpx|

Introduction to data-parallel programming, SIMD execution policies, and vectorization techniques relevant to modern C++ and HPC workloads.

- Futures and Asynchronous Programming

Explanation of the asynchronous programming model in HPX, including futures, async, and dataflow-based execution patterns.

- Performance Analysis and Optimization

Guidance on profiling HPX applications, analyzing performance counters, identifying bottlenecks, and applying optimization strategies.

- Task Scheduling and Custom Executors

Detailed discussion of the execution model in HPX, built-in executors, and mechanisms for defining custom execution strategies.

- Migration Guides

Best practices and recommendations for transitioning from other HPC frameworks to HPX.

- HPX in Practice

Examples of open-source libraries and applications using HPX, illustrating real-world usage patterns and ecosystem integration.

Examples#

The following sections analyze some examples to help you get familiar with the HPX style of programming. We start off with simple examples that utilize basic HPX elements and then begin to expose the reader to the more complex and powerful HPX concepts. Section Building tests and examples shows how you can build the examples.

Asynchronous execution#

The Fibonacci sequence is a sequence of numbers starting with 0 and 1 where every subsequent number is the sum of the previous two numbers. In this example, we will use HPX to calculate the value of the n-th element of the Fibonacci sequence. In order to compute this problem in parallel, we will use a facility known as a future.

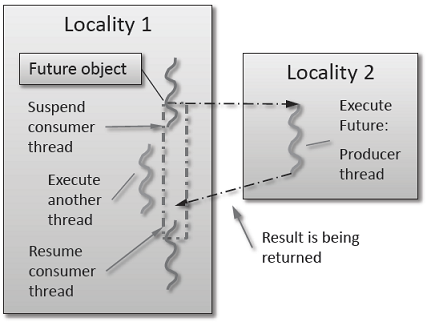

As shown in the Fig. 1 below, a future encapsulates a delayed computation. It acts as a proxy for a result initially not known, most of the time because the computation of the result has not completed yet. The future synchronizes the access of this value by optionally suspending any HPX-threads requesting the result until the value is available. When a future is created, it spawns a new HPX-thread (either remotely with a parcel or locally by placing it into the thread queue) which, when run, will execute the function associated with the future. The arguments of the function are bound when the future is created.

Fig. 1 Schematic of a future execution.#

Once the function has finished executing, a write operation is performed on the future. The write operation marks the future as completed, and optionally stores data returned by the function. When the result of the delayed computation is needed, a read operation is performed on the future. If the future’s function hasn’t completed when a read operation is performed on it, the reader HPX-thread is suspended until the future is ready. The future facility allows HPX to schedule work early in a program so that when the function value is needed it will already be calculated and available. We use this property in our Fibonacci example below to enable its parallel execution.

Setup#

The source code for this example can be found here:

fibonacci_local.cpp.

To compile this program, go to your HPX build directory (see Building HPX for information on configuring and building HPX) and enter:

$ make examples.quickstart.fibonacci_local

To run the program type:

$ ./bin/fibonacci_local

This should print (time should be approximate):

fibonacci(10) == 55

elapsed time: 0.002430 [s]

This run used the default settings, which calculate the tenth element of the

Fibonacci sequence. To declare which Fibonacci value you want to calculate, use

the --n-value option. Additionally you can use the --hpx:threads

option to declare how many OS-threads you wish to use when running the program.

For instance, running:

$ ./bin/fibonacci --n-value 20 --hpx:threads 4

Will yield:

fibonacci(20) == 6765

elapsed time: 0.062854 [s]

Walkthrough#

Now that you have compiled and run the code, let’s look at how the code works.

Since this code is written in C++, we will begin with the main() function.

Here you can see that in HPX, main() is only used to initialize the

runtime system. It is important to note that application-specific command line

options are defined here. HPX uses Boost.Program_options for command line

processing. You can see that our programs --n-value option is set by calling

the add_options() method on an instance of

hpx::program_options::options_description. The default value of the

variable is set to 10. This is why when we ran the program for the first time

without using the --n-value option the program returned the 10th value of

the Fibonacci sequence. The constructor argument of the description is the text

that appears when a user uses the --hpx:help option to see what

command line options are available. HPX_APPLICATION_STRING is a macro that

expands to a string constant containing the name of the HPX application

currently being compiled.

In HPX main() is used to initialize the runtime system and pass the

command line arguments to the program. If you wish to add command line options

to your program you would add them here using the instance of the Boost class

options_description, and invoking the public member function

.add_options() (see Boost Documentation for more details). hpx::init

calls hpx_main() after setting up HPX, which is where the logic of our

program is encoded.

int main(int argc, char* argv[])

{

// Configure application-specific options

hpx::program_options::options_description desc_commandline(

"Usage: " HPX_APPLICATION_STRING " [options]");

// clang-format off

desc_commandline.add_options()

("n-value",

hpx::program_options::value<std::uint64_t>()->default_value(10),

"n value for the Fibonacci function")

;

// clang-format on

// Initialize and run HPX

hpx::local::init_params init_args;

init_args.desc_cmdline = desc_commandline;

return hpx::local::init(hpx_main, argc, argv, init_args);

}

The hpx::init function in main() starts the runtime system, and

invokes hpx_main() as the first HPX-thread. Below we can see that the

basic program is simple. The command line option --n-value is read in, a

timer (hpx::chrono::high_resolution_timer) is set up to record the

time it takes to do the computation, the fibonacci function is invoked

synchronously, and the answer is printed out.

int hpx_main(hpx::program_options::variables_map& vm)

{

hpx::threads::add_scheduler_mode(

hpx::threads::policies::scheduler_mode::fast_idle_mode);

// extract command line argument, i.e. fib(N)

std::uint64_t n = vm["n-value"].as<std::uint64_t>();

{

// Keep track of the time required to execute.

hpx::chrono::high_resolution_timer t;

std::uint64_t r = fibonacci(n);

char const* fmt = "fibonacci({1}) == {2}\nelapsed time: {3} [s]\n";

hpx::util::format_to(std::cout, fmt, n, r, t.elapsed());

}

return hpx::local::finalize(); // Handles HPX shutdown

}

The fibonacci function itself is synchronous as the work done inside is

asynchronous. To understand what is happening we have to look inside the

fibonacci function:

std::uint64_t fibonacci(std::uint64_t n)

{

if (n < 2)

return n;

hpx::future<std::uint64_t> n1 = hpx::async(fibonacci, n - 1);

std::uint64_t n2 = fibonacci(n - 2);

return n1.get() + n2; // wait for the Future to return their values

}

This block of code looks similar to regular C++ code. First, if (n < 2),

meaning n is 0 or 1, then we return 0 or 1 (recall the first element of the

Fibonacci sequence is 0 and the second is 1). If n is larger than 1 we spawn two

new tasks whose results are contained in n1 and n2. This is done using

hpx::async which takes as arguments a function (function pointer,

object or lambda) and the arguments to the function. Instead of returning a

std::uint64_t like fibonacci does, hpx::async returns a future of a

std::uint64_t, i.e. hpx::future<std::uint64_t>. Each of these futures

represents an asynchronous, recursive call to fibonacci. After we’ve created

the futures, we wait for both of them to finish computing, we add them together,

and return that value as our result. We get the values from the futures using

the get method. The recursive call tree will continue until n is equal to 0

or 1, at which point the value can be returned because it is implicitly known.

When this termination condition is reached, the futures can then be added up,

producing the n-th value of the Fibonacci sequence.

Note that calling get potentially blocks the calling HPX-thread, and lets

other HPX-threads run in the meantime. There are, however, more efficient ways

of doing this. examples/quickstart/fibonacci_futures.cpp contains many more

variations of locally computing the Fibonacci numbers, where each method makes

different tradeoffs in where asynchrony and parallelism is applied. To get

started, however, the method above is sufficient and optimizations can be

applied once you are more familiar with HPX. The example

Dataflow presents dataflow, which is a way to more

efficiently chain together multiple tasks.

Parallel algorithms#

This program will perform a matrix multiplication in parallel. The output will look something like this:

Matrix A is :

4 9 6

1 9 8

Matrix B is :

4 9

6 1

9 8

Resultant Matrix is :

124 93

130 82

Setup#

The source code for this example can be found here:

matrix_multiplication.cpp.

To compile this program, go to your HPX build directory (see Building HPX for information on configuring and building HPX) and enter:

$ make examples.quickstart.matrix_multiplication

To run the program type:

$ ./bin/matrix_multiplication

or:

$ ./bin/matrix_multiplication --n 2 --m 3 --k 2 --s 100 --l 0 --u 10

where the first matrix is n x m and the second m x k, s is the seed for creating the random values of the matrices and the range of these values is [l,u]

This should print:

Matrix A is :

4 9 6

1 9 8

Matrix B is :

4 9

6 1

9 8

Resultant Matrix is :

124 93

130 82

Notice that the numbers may be different because of the random initialization of the matrices.

Walkthrough#

Now that you have compiled and run the code, let’s look at how the code works.

First, main() is used to initialize the runtime system and pass the command line arguments to the program.

hpx::init calls hpx_main() after setting up HPX, which is where our program is implemented.

int main(int argc, char* argv[])

{

using namespace hpx::program_options;

options_description cmdline("usage: " HPX_APPLICATION_STRING " [options]");

// clang-format off

cmdline.add_options()

("n",

hpx::program_options::value<std::size_t>()->default_value(2),

"Number of rows of first matrix")

("m",

hpx::program_options::value<std::size_t>()->default_value(3),

"Number of columns of first matrix (equal to the number of rows of "

"second matrix)")

("k",

hpx::program_options::value<std::size_t>()->default_value(2),

"Number of columns of second matrix")

("seed,s",

hpx::program_options::value<unsigned int>(),

"The random number generator seed to use for this run")

("l",

hpx::program_options::value<int>()->default_value(0),

"Lower limit of range of values")

("u",

hpx::program_options::value<int>()->default_value(10),

"Upper limit of range of values");

// clang-format on

hpx::local::init_params init_args;

init_args.desc_cmdline = cmdline;

return hpx::local::init(hpx_main, argc, argv, init_args);

}

Proceeding to the hpx_main() function, we can see that matrix multiplication can be done very easily.

int hpx_main(hpx::program_options::variables_map& vm)

{

using element_type = int;

// Define matrix sizes

std::size_t const rowsA = vm["n"].as<std::size_t>();

std::size_t const colsA = vm["m"].as<std::size_t>();

std::size_t const rowsB = colsA;

std::size_t const colsB = vm["k"].as<std::size_t>();

std::size_t const rowsR = rowsA;

std::size_t const colsR = colsB;

// Initialize matrices A and B

std::vector<int> A(rowsA * colsA);

std::vector<int> B(rowsB * colsB);

std::vector<int> R(rowsR * colsR);

// Define seed

unsigned int seed = std::random_device{}();

if (vm.count("seed"))

seed = vm["seed"].as<unsigned int>();

gen.seed(seed);

std::cout << "using seed: " << seed << std::endl;

// Define range of values

int const lower = vm["l"].as<int>();

int const upper = vm["u"].as<int>();

// Matrices have random values in the range [lower, upper]

std::uniform_int_distribution<element_type> dis(lower, upper);

auto generator = std::bind(dis, gen);

hpx::ranges::generate(A, generator);

hpx::ranges::generate(B, generator);

// Perform matrix multiplication

hpx::experimental::for_loop(hpx::execution::par, 0, rowsA, [&](auto i) {

hpx::experimental::for_loop(0, colsB, [&](auto j) {

R[i * colsR + j] = 0;

hpx::experimental::for_loop(0, rowsB, [&](auto k) {

R[i * colsR + j] += A[i * colsA + k] * B[k * colsB + j];

});

});

});

// Print all 3 matrices

print_matrix(A, rowsA, colsA, "A");

print_matrix(B, rowsB, colsB, "B");

print_matrix(R, rowsR, colsR, "R");

return hpx::local::finalize();

}

First, the dimensions of the matrices are defined. If they were not given as command-line arguments, their default values are 2 x 3 for the first matrix and 3 x 2 for the second. We use standard vectors to define the matrices to be multiplied as well as the resultant matrix.

To give some random initial values to our matrices, we use std::uniform_int_distribution. Then, std::bind() is used

along with hpx::ranges::generate() to yield two matrices A and B, which contain values in the range of [0, 10] or in

the range defined by the user at the command-line arguments. The seed to generate the values can also be defined by the user.

The next step is to perform the matrix multiplication in parallel. This can be done by just using an hpx::experimental::for_loop

combined with a parallel execution policy hpx::execution::par as the outer loop of the multiplication. Note that the execution

of hpx::experimental::for_loop without specifying an execution policy is equivalent to specifying hpx::execution::seq

as the execution policy.

Finally, the matrices A, B that are multiplied as well as the resultant matrix R are printed using the following function.

void print_matrix(std::vector<int> const& M, std::size_t rows, std::size_t cols,

char const* message)

{

std::cout << "\nMatrix " << message << " is:" << std::endl;

for (std::size_t i = 0; i < rows; i++)

{

for (std::size_t j = 0; j < cols; j++)

std::cout << M[i * cols + j] << " ";

std::cout << "\n";

}

}

Asynchronous execution with actions#

This example extends the previous example by

introducing actions: functions that can be run remotely. In this

example, however, we will still only run the action locally. The mechanism to

execute actions stays the same: hpx::async. Later

examples will demonstrate running actions on remote localities

(e.g. Remote execution with actions).

Setup#

The source code for this example can be found here:

fibonacci.cpp.

To compile this program, go to your HPX build directory (see Building HPX for information on configuring and building HPX) and enter:

$ make examples.quickstart.fibonacci

To run the program type:

$ ./bin/fibonacci

This should print (time should be approximate):

fibonacci(10) == 55

elapsed time: 0.00186288 [s]

This run used the default settings, which calculate the tenth element of the

Fibonacci sequence. To declare which Fibonacci value you want to calculate, use

the --n-value option. Additionally you can use the --hpx:threads

option to declare how many OS-threads you wish to use when running the program.

For instance, running:

$ ./bin/fibonacci --n-value 20 --hpx:threads 4

Will yield:

fibonacci(20) == 6765

elapsed time: 0.233827 [s]

Walkthrough#

The code needed to initialize the HPX runtime is the same as in the previous example:

int main(int argc, char* argv[])

{

// Configure application-specific options

hpx::program_options::options_description desc_commandline(

"Usage: " HPX_APPLICATION_STRING " [options]");

desc_commandline.add_options()("n-value",

hpx::program_options::value<std::uint64_t>()->default_value(10),

"n value for the Fibonacci function");

// Initialize and run HPX

hpx::init_params init_args;

init_args.desc_cmdline = desc_commandline;

return hpx::init(argc, argv, init_args);

}

The hpx::init function in main() starts the runtime system, and

invokes hpx_main() as the first HPX-thread. The command line option

--n-value is read in, a timer

(hpx::chrono::high_resolution_timer) is set up to record the time it

takes to do the computation, the fibonacci action is invoked

synchronously, and the answer is printed out.

int hpx_main(hpx::program_options::variables_map& vm)

{

// extract command line argument, i.e. fib(N)

std::uint64_t n = vm["n-value"].as<std::uint64_t>();

{

// Keep track of the time required to execute.

hpx::chrono::high_resolution_timer t;

// Wait for fib() to return the value

fibonacci_action fib;

std::uint64_t r = fib(hpx::find_here(), n);

char const* fmt = "fibonacci({1}) == {2}\nelapsed time: {3} [s]\n";

hpx::util::format_to(std::cout, fmt, n, r, t.elapsed());

}

return hpx::finalize(); // Handles HPX shutdown

}

Upon a closer look we see that we’ve created a std::uint64_t to store the

result of invoking our fibonacci_action fib. This action will

launch synchronously (as the work done inside of the action will be

asynchronous itself) and return the result of the Fibonacci sequence. But wait,

what is an action? And what is this fibonacci_action? For starters,

an action is a wrapper for a function. By wrapping functions, HPX can

send packets of work to different processing units. These vehicles allow users

to calculate work now, later, or on certain nodes. The first argument to our

action is the location where the action should be run. In this

case, we just want to run the action on the machine that we are

currently on, so we use hpx::find_here. To

further understand this we turn to the code to find where fibonacci_action

was defined:

// forward declaration of the Fibonacci function

std::uint64_t fibonacci(std::uint64_t n);

// This is to generate the required boilerplate we need for the remote

// invocation to work.

HPX_PLAIN_ACTION(fibonacci, fibonacci_action)

A plain action is the most basic form of action. Plain

actions wrap simple global functions which are not associated with any

particular object (we will discuss other types of actions in

Components and actions). In this block of code the function fibonacci()

is declared. After the declaration, the function is wrapped in an action

in the declaration HPX_PLAIN_ACTION. This function takes two

arguments: the name of the function that is to be wrapped and the name of the

action that you are creating.

This picture should now start making sense. The function fibonacci() is

wrapped in an action fibonacci_action, which was run synchronously

but created asynchronous work, then returns a std::uint64_t representing the

result of the function fibonacci(). Now, let’s look at the function

fibonacci():

std::uint64_t fibonacci(std::uint64_t n)

{

if (n < 2)

return n;

// We restrict ourselves to execute the Fibonacci function locally.

hpx::id_type const locality_id = hpx::find_here();

// Invoking the Fibonacci algorithm twice is inefficient.

// However, we intentionally demonstrate it this way to create some

// heavy workload.

fibonacci_action fib;

hpx::future<std::uint64_t> n1 = hpx::async(fib, locality_id, n - 1);

hpx::future<std::uint64_t> n2 = hpx::async(fib, locality_id, n - 2);

return n1.get() +

n2.get(); // wait for the Futures to return their values

}

This block of code is much more straightforward and should look familiar from

the previous example. First, if (n < 2),

meaning n is 0 or 1, then we return 0 or 1 (recall the first element of the

Fibonacci sequence is 0 and the second is 1). If n is larger than 1 we spawn two

tasks using hpx::async. Each of these futures represents an

asynchronous, recursive call to fibonacci. As previously we wait for both

futures to finish computing, get the results, add them together, and return that

value as our result. The recursive call tree will continue until n is equal to 0

or 1, at which point the value can be returned because it is implicitly known.

When this termination condition is reached, the futures can then be added up,

producing the n-th value of the Fibonacci sequence.

Remote execution with actions#

This program will print out a hello world message on every OS-thread on every locality. The output will look something like this:

hello world from OS-thread 1 on locality 0

hello world from OS-thread 1 on locality 1

hello world from OS-thread 0 on locality 0

hello world from OS-thread 0 on locality 1

Setup#

The source code for this example can be found here:

hello_world_distributed.cpp.

To compile this program, go to your HPX build directory (see Building HPX for information on configuring and building HPX) and enter:

$ make examples.quickstart.hello_world_distributed

To run the program type:

$ ./bin/hello_world_distributed

This should print:

hello world from OS-thread 0 on locality 0

To use more OS-threads use the command line option --hpx:threads and

type the number of threads that you wish to use. For example, typing:

$ ./bin/hello_world_distributed --hpx:threads 2

will yield:

hello world from OS-thread 1 on locality 0

hello world from OS-thread 0 on locality 0

Notice how the ordering of the two print statements will change with subsequent runs. To run this program on multiple localities please see the section How to use HPX applications with PBS.

Walkthrough#

Now that you have compiled and run the code, let’s look at how the code works,

beginning with main():

// Here is the main entry point. By using the include 'hpx/hpx_main.hpp' HPX

// will invoke the plain old C-main() as its first HPX thread.

int main()

{

// Get a list of all available localities.

std::vector<hpx::id_type> localities = hpx::find_all_localities();

// Reserve storage space for futures, one for each locality.

std::vector<hpx::future<void>> futures;

futures.reserve(localities.size());

for (hpx::id_type const& node : localities)

{

// Asynchronously start a new task. The task is encapsulated in a

// future, which we can query to determine if the task has

// completed.

typedef hello_world_foreman_action action_type;

futures.push_back(hpx::async<action_type>(node));

}

// The non-callback version of hpx::wait_all takes a single parameter,

// a vector of futures to wait on. hpx::wait_all only returns when

// all of the futures have finished.

hpx::wait_all(futures);

return 0;

}

In this excerpt of the code we again see the use of futures. This time the

futures are stored in a vector so that they can easily be accessed.

hpx::wait_all is a family of functions that wait on for an

std::vector<> of futures to become ready. In this piece of code, we are

using the synchronous version of hpx::wait_all, which takes one

argument (the std::vector<> of futures to wait on). This function will not

return until all the futures in the vector have been executed.

In Asynchronous execution with actions we used hpx::find_here to specify the

target of our actions. Here, we instead use

hpx::find_all_localities, which returns an std::vector<>

containing the identifiers of all the machines in the system, including the one

that we are on.

As in Asynchronous execution with actions our futures are set using

hpx::async<>. The hello_world_foreman_action is declared

here:

// Define the boilerplate code necessary for the function 'hello_world_foreman'

// to be invoked as an HPX action.

HPX_PLAIN_ACTION(hello_world_foreman, hello_world_foreman_action)

Another way of thinking about this wrapping technique is as follows: functions (the work to be done) are wrapped in actions, and actions can be executed locally or remotely (e.g. on another machine participating in the computation).

Now it is time to look at the hello_world_foreman() function which was

wrapped in the action above:

void hello_world_foreman()

{

// Get the number of worker OS-threads in use by this locality.

std::size_t const os_threads = hpx::get_os_thread_count();

// Populate a set with the OS-thread numbers of all OS-threads on this

// locality. When the hello world message has been printed on a particular

// OS-thread, we will remove it from the set.

std::set<std::size_t> attendance;

for (std::size_t os_thread = 0; os_thread < os_threads; ++os_thread)

attendance.insert(os_thread);

// As long as there are still elements in the set, we must keep scheduling

// HPX-threads. Because HPX features work-stealing task schedulers, we have

// no way of enforcing which worker OS-thread will actually execute

// each HPX-thread.

while (!attendance.empty())

{

// Each iteration, we create a task for each element in the set of

// OS-threads that have not said "Hello world". Each of these tasks

// is encapsulated in a future.

std::vector<hpx::future<std::size_t>> futures;

futures.reserve(attendance.size());

for (std::size_t worker : attendance)

{

// Asynchronously start a new task. The task is encapsulated in a

// future that we can query to determine if the task has completed.

//

// We give the task a hint to run on a particular worker thread

// (core) and suggest binding the scheduled thread to the given

// core, but no guarantees are given by the scheduler that the task

// will actually run on that worker thread. It will however try as

// hard as possible to place the new task on the given worker

// thread.

hpx::execution::parallel_executor exec(

hpx::threads::thread_priority::bound);

hpx::threads::thread_schedule_hint hint(

hpx::threads::thread_schedule_hint_mode::thread,

static_cast<std::int16_t>(worker));

// Annotate the created threads with the given thread name

hpx::execution::experimental::annotating_executor annotating_exec(

hpx::execution::experimental::with_hint(exec, hint),

std::string("hello_world_worker#") + std::to_string(worker));

futures.push_back(

hpx::async(annotating_exec, hello_world_worker, worker));

}

// Wait for all of the futures to finish. The callback version of the

// hpx::wait_each function takes two arguments: a vector of futures,

// and a binary callback. The callback takes two arguments; the first

// is the index of the future in the vector, and the second is the

// return value of the future. hpx::wait_each doesn't return until

// all the futures in the vector have returned.

hpx::spinlock mtx;

hpx::wait_each(hpx::unwrapping([&](std::size_t t) {

if (std::size_t(-1) != t)

{

std::lock_guard<hpx::spinlock> lk(mtx);

attendance.erase(t);

}

}),

futures);

}

}

Now, before we discuss hello_world_foreman(), let’s talk about the

hpx::wait_each function.

The version of hpx::wait_each invokes a callback function

provided by the user, supplying the callback function with the result of the

future.

In hello_world_foreman(), an std::set<> called attendance keeps

track of which OS-threads have printed out the hello world message. When the

OS-thread prints out the statement, the future is marked as ready, and

hpx::wait_each in hello_world_foreman(). If it is not

executing on the correct OS-thread, it returns a value of -1, which causes

hello_world_foreman() to leave the OS-thread id in attendance.

std::size_t hello_world_worker(std::size_t desired)

{

// Returns the OS-thread number of the worker that is running this

// HPX-thread.

std::size_t current = hpx::get_worker_thread_num();

if (current == desired)

{

// The HPX-thread has been run on the desired OS-thread.

char const* msg = "hello world from OS-thread {1} on locality {2}\n";

hpx::util::format_to(hpx::cout, msg, desired, hpx::get_locality_id())

<< std::flush;

return desired;

}

// This HPX-thread has been run by the wrong OS-thread, make the foreman

// try again by rescheduling it.

return std::size_t(-1);

}

Because HPX features work stealing task schedulers, there is no way to guarantee that an action will be scheduled on a particular OS-thread. This is why we must use a guess-and-check approach.

Components and actions#

The accumulator examples demonstrate the use of components. Components are C++ classes that expose methods as a type of HPX action. These actions are called component actions. There are three examples: - accumulator - template accumulator - template function accumulator

Components are globally named, meaning that a component action can be called remotely (e.g., from another machine). There are two accumulator examples in HPX.

In the Asynchronous execution with actions and the Remote execution with actions, we introduced plain actions, which wrapped global functions. The target of a plain action is an identifier which refers to a particular machine involved in the computation. For plain actions, the target is the machine where the action will be executed.

Component actions, however, do not target machines. Instead, they target component instances. The instance may live on the machine that we’ve invoked the component action from, or it may live on another machine.

The components in these examples expose three different functions:

reset()- Resets the accumulator value to 0.add(arg)- Addsargto the accumulators value.query()- Queries the value of the accumulator.

These examples create an instance of the (template or template function) accumulator, and then allow the user to enter commands at a prompt, which subsequently invoke actions on the accumulator instance.

Accumulator#

Setup#

The source code for this example can be found here:

accumulator_client.cpp.

To compile this program, go to your HPX build directory (see Building HPX for information on configuring and building HPX) and enter:

$ make examples.accumulators.accumulator

To run the program type:

$ ./bin/accumulator_client

Once the program starts running, it will print the following prompt and then wait for input. An example session is given below:

commands: reset, add [amount], query, help, quit

> add 5

> add 10

> query

15

> add 2

> query

17

> reset

> add 1

> query

1

> quit

Walkthrough#

Now, let’s take a look at the source code of the accumulator example. This

example consists of two parts: an HPX component library (a library that

exposes an HPX component) and a client application which uses the library.

This walkthrough will cover the HPX component library. The code for the client

application can be found here: accumulator_client.cpp.

An HPX component is represented by two C++ classes:

A server class - The implementation of the component’s functionality.

A client class - A high-level interface that acts as a proxy for an instance of the component.

Typically, these two classes both have the same name, but the server class

usually lives in different sub-namespaces (server). For example, the full

names of the two classes in accumulator are:

examples::server::accumulator(server class)examples::accumulator(client class)

The following code is from server/accumulator.hpp.

All HPX component server classes must inherit publicly from the HPX

component base class: hpx::components::component_base

The accumulator component inherits from

hpx::components::locking_hook. This allows the runtime system to

ensure that all action invocations are serialized. That means that the system

ensures that no two actions are invoked at the same time on a given component

instance. This makes the component thread safe and no additional locking has to

be implemented by the user. Moreover, an accumulator component is a component

because it also inherits from hpx::components::component_base (the

template argument passed to locking_hook is used as its base class). The

following snippet shows the corresponding code:

class accumulator

: public hpx::components::locking_hook<

hpx::components::component_base<accumulator>>

Our accumulator class will need a data member to store its value in, so let’s declare a data member:

argument_type value_;

The constructor for this class simply initializes value_ to 0:

accumulator()

: value_(0)

{

}

Next, let’s look at the three methods of this component that we will be exposing as component actions:

Here are the action types. These types wrap the methods we’re exposing. The wrapping technique is very similar to the one used in the Asynchronous execution with actions and the Remote execution with actions:

HPX_DEFINE_COMPONENT_ACTION(accumulator, reset)

HPX_DEFINE_COMPONENT_ACTION(accumulator, add)

HPX_DEFINE_COMPONENT_ACTION(accumulator, query)

The last piece of code in the server class header is the declaration of the action type registration code:

HPX_REGISTER_ACTION_DECLARATION(

examples::server::accumulator::reset_action, accumulator_reset_action)

HPX_REGISTER_ACTION_DECLARATION(

examples::server::accumulator::add_action, accumulator_add_action)

HPX_REGISTER_ACTION_DECLARATION(

examples::server::accumulator::query_action, accumulator_query_action)

Note

The code above must be placed in the global namespace.

The rest of the registration code is in accumulator.cpp

///////////////////////////////////////////////////////////////////////////////

// Add factory registration functionality.

HPX_REGISTER_COMPONENT_MODULE()

///////////////////////////////////////////////////////////////////////////////

typedef hpx::components::component<examples::server::accumulator>

accumulator_type;

HPX_REGISTER_COMPONENT(accumulator_type, accumulator)

///////////////////////////////////////////////////////////////////////////////

// Serialization support for accumulator actions.

HPX_REGISTER_ACTION(

accumulator_type::wrapped_type::reset_action, accumulator_reset_action)

HPX_REGISTER_ACTION(

accumulator_type::wrapped_type::add_action, accumulator_add_action)

HPX_REGISTER_ACTION(

accumulator_type::wrapped_type::query_action, accumulator_query_action)

Note

The code above must be placed in the global namespace.

The following code is from accumulator.hpp

The client class is the primary interface to a component instance. Client classes are used to create components:

// Create a component on this locality.

examples::accumulator c = hpx::new_<examples::accumulator>(hpx::find_here());

and to invoke component actions:

c.add(hpx::launch::apply, 4);

Clients, like servers, need to inherit from a base class, this time,

hpx::components::client_base:

class accumulator

: public hpx::components::client_base<accumulator, server::accumulator>

For readability, we typedef the base class like so:

typedef hpx::components::client_base<accumulator, server::accumulator>

base_type;

Here are examples of how to expose actions through a client class:

There are a few different ways of invoking actions:

Non-blocking: For actions that don’t have return types, or when we do not care about the result of an action, we can invoke the action using fire-and-forget semantics. This means that once we have asked HPX to compute the action, we forget about it completely and continue with our computation. We use

hpx::postto invoke an action in a non-blocking fashion.

void reset(hpx::launch::apply_policy)

{

HPX_ASSERT(this->get_id());

typedef server::accumulator::reset_action action_type;

hpx::post(action_type(), this->get_id());

}

Asynchronous: Futures, as demonstrated in Asynchronous execution, Asynchronous execution with actions, and the Remote execution with actions, enable asynchronous action invocation. Here’s an example from the accumulator client class:

hpx::future<argument_type> query(hpx::launch::async_policy)

{

HPX_ASSERT(this->get_id());

typedef server::accumulator::query_action action_type;

return hpx::async(action_type(), this->get_id());

}

Synchronous: To invoke an action in a fully synchronous manner, we can simply call

hpx::syncwhich is semantically equivalent tohpx::async().get()(i.e., create a future and immediately wait on it to be ready). Here’s an example from the accumulator client class:

void add(argument_type arg)

{

HPX_ASSERT(this->get_id());

typedef server::accumulator::add_action action_type;

action_type()(this->get_id(), arg);

}

Note that this->get_id() references a data member of the

hpx::components::client_base base class which identifies the server

accumulator instance.

hpx::id_type is a type which represents a global identifier

in HPX. This type specifies the target of an action. This is the type that is

returned by hpx::find_here in which case it represents the

locality the code is running on.

Template accumulator#

Walkthrough#

The following code is from server/template_accumulator.hpp.

Similarly to the accumulator example, the component server class

inherits publicly from hpx::components::component_base and from

hpx::components::locking_hook ensuring thread-safe method invocations.

template <typename T>

class template_accumulator

: public hpx::components::locking_hook<

hpx::components::component_base<template_accumulator<T>>>

The body of the template accumulator class remains mainly the same as the accumulator with the difference that it uses templates in the data types.

typedef T argument_type;

template_accumulator()

: value_(0)

{

}

///////////////////////////////////////////////////////////////////////

// Exposed functionality of this component.

/// Reset the components value to 0.

void reset()

{

// set value_ to 0.

value_ = 0;

}

/// Add the given number to the accumulator.

void add(argument_type arg)

{

// add value_ to arg, and store the result in value_.

value_ += arg;

}

/// Return the current value to the caller.

argument_type query() const

{

// Get the value of value_.

return value_;

}

///////////////////////////////////////////////////////////////////////

// Each of the exposed functions needs to be encapsulated into an

// action type, generating all required boilerplate code for threads,

// serialization, etc.

HPX_DEFINE_COMPONENT_ACTION(template_accumulator, reset)

HPX_DEFINE_COMPONENT_ACTION(template_accumulator, add)

HPX_DEFINE_COMPONENT_ACTION(template_accumulator, query)

The last piece of code in the server class header is the declaration of the action type registration code. REGISTER_TEMPLATE_ACCUMULATOR_DECLARATION(type) declares actions for the specified type, while REGISTER_TEMPLATE_ACCUMULATOR(type) registers the actions and the component for the specified type, using macros to handle boilerplate code.

#define REGISTER_TEMPLATE_ACCUMULATOR_DECLARATION(type) \

HPX_REGISTER_ACTION_DECLARATION( \

examples::server::template_accumulator<type>::reset_action, \

HPX_PP_CAT(__template_accumulator_reset_action_, type)) \

\

HPX_REGISTER_ACTION_DECLARATION( \

examples::server::template_accumulator<type>::add_action, \

HPX_PP_CAT(__template_accumulator_add_action_, type)) \

\

HPX_REGISTER_ACTION_DECLARATION( \

examples::server::template_accumulator<type>::query_action, \

HPX_PP_CAT(__template_accumulator_query_action_, type)) \

/**/

#define REGISTER_TEMPLATE_ACCUMULATOR(type) \

HPX_REGISTER_ACTION( \

examples::server::template_accumulator<type>::reset_action, \

HPX_PP_CAT(__template_accumulator_reset_action_, type)) \

\

HPX_REGISTER_ACTION( \

examples::server::template_accumulator<type>::add_action, \

HPX_PP_CAT(__template_accumulator_add_action_, type)) \

\

HPX_REGISTER_ACTION( \

examples::server::template_accumulator<type>::query_action, \

HPX_PP_CAT(__template_accumulator_query_action_, type)) \

\

typedef ::hpx::components::component< \

examples::server::template_accumulator<type>> \

HPX_PP_CAT(__template_accumulator_, type); \

HPX_REGISTER_COMPONENT(HPX_PP_CAT(__template_accumulator_, type)) \

/**/

Note

The code above must be placed in the global namespace.

Finally, HPX_REGISTER_COMPONENT_MODULE() in file server/template_accumulator.cpp adds the factory registration functionality.

The client class of the template accumulator can be found in template_accumulator.hpp and is very similar to the client class of the accumulator with the only difference that it uses templates and hence can work with different types.

Template function accumulator#

Walkthrough#

The following code is from server/template_function_accumulator.hpp.

The component server class inherits publicly from hpx::components::component_base.

class template_function_accumulator

: public hpx::components::component_base<template_function_accumulator>

typedef hpx::spinlock mutex_type defines a mutex_type as hpx::spinlock for thread safety, while the code that follows exposes the functionality of this component.

///////////////////////////////////////////////////////////////////////

// Exposed functionality of this component.

/// Reset the value to 0.

void reset()

{

// Atomically set value_ to 0.

std::lock_guard<mutex_type> l(mtx_);

value_ = 0;

}

/// Add the given number to the accumulator.

template <typename T>

void add(T arg)

{

// Atomically add value_ to arg, and store the result in value_.

std::lock_guard<mutex_type> l(mtx_);

value_ += static_cast<double>(arg);

}

/// Return the current value to the caller.

double query() const

{

// Get the value of value_.

std::lock_guard<mutex_type> l(mtx_);

return value_;

}

reset(): Resets the accumulator value to 0 in a thread-safe manner using std::lock_guard.

add(): Adds a value to the accumulator, allowing any type T that can be cast to double.

query(): Returns the current value of the accumulator in a thread-safe manner.

To define the actions for reset() and query() we can use the macro HPX_DEFINE_COMPONENT_ACTION. However, actions with template arguments require special type definitions. Therefore, we use make_action() to define add().

///////////////////////////////////////////////////////////////////////

// Each of the exposed functions needs to be encapsulated into an

// action type, generating all required boilerplate code for threads,

// serialization, etc.

HPX_DEFINE_COMPONENT_ACTION(template_function_accumulator, reset)

HPX_DEFINE_COMPONENT_ACTION(template_function_accumulator, query)

// Actions with template arguments (see add<>() above) require special

// type definitions. The simplest way to define such an action type is

// by deriving from the HPX facility make_action.

template <typename T>

struct add_action

: hpx::actions::make_action<void (template_function_accumulator::*)(

T),

&template_function_accumulator::template add<T>,

add_action<T>>::type

{

};

The last piece of code in the server class header is the action registration:

HPX_REGISTER_ACTION_DECLARATION(

examples::server::template_function_accumulator::reset_action,

managed_accumulator_reset_action)

HPX_REGISTER_ACTION_DECLARATION(

examples::server::template_function_accumulator::query_action,

managed_accumulator_query_action)

Note

The code above must be placed in the global namespace.

The rest of the registration code is in accumulator.cpp

///////////////////////////////////////////////////////////////////////////////

// Add factory registration functionality.

HPX_REGISTER_COMPONENT_MODULE()

///////////////////////////////////////////////////////////////////////////////

typedef hpx::components::component<

examples::server::template_function_accumulator>

accumulator_type;

HPX_REGISTER_COMPONENT(accumulator_type, template_function_accumulator)

///////////////////////////////////////////////////////////////////////////////

// Serialization support for managed_accumulator actions.

HPX_REGISTER_ACTION(accumulator_type::wrapped_type::reset_action,

managed_accumulator_reset_action)

HPX_REGISTER_ACTION(accumulator_type::wrapped_type::query_action,

managed_accumulator_query_action)

Note

The code above must be placed in the global namespace.

The client class of the template accumulator can be found in template_function_accumulator.hpp and is very similar to the client class of the accumulator with the only difference that it uses templates and hence can work with different types.

Dataflow#

HPX provides its users with several different tools to simply express parallel concepts. One of these tools is a local control object (LCO) called dataflow. An LCO is a type of component that can spawn a new thread when triggered. They are also distinguished from other components by a standard interface that allow users to understand and use them easily. A Dataflow, being an LCO, is triggered when the values it depends on become available. For instance, if you have a calculation X that depends on the results of three other calculations, you could set up a dataflow that would begin the calculation X as soon as the other three calculations have returned their values. Dataflows are set up to depend on other dataflows. It is this property that makes dataflow a powerful parallelization tool. If you understand the dependencies of your calculation, you can devise a simple algorithm that sets up a dependency tree to be executed. In this example, we calculate compound interest. To calculate compound interest, one must calculate the interest made in each compound period, and then add that interest back to the principal before calculating the interest made in the next period. A practical person would, of course, use the formula for compound interest:

where \(F\) is the future value, \(P\) is the principal value, \(i\) is the interest rate, and \(n\) is the number of compound periods.

However, for the sake of this example, we have chosen to manually calculate the future value by iterating:

and

Setup#

The source code for this example can be found here:

interest_calculator.cpp.

To compile this program, go to your HPX build directory (see Building HPX for information on configuring and building HPX) and enter:

$ make examples.quickstart.interest_calculator

To run the program type:

$ ./bin/interest_calculator --principal 100 --rate 5 --cp 6 --time 36

Final amount: 134.01

Amount made: 34.0096

Walkthrough#

Let us begin with main. Here we can see that we again are using

Boost.Program_options to set our command line variables (see

Asynchronous execution with actions for more details). These options set the principal,

rate, compound period, and time. It is important to note that the units of time

for cp and time must be the same.

int main(int argc, char** argv)

{

options_description cmdline("Usage: " HPX_APPLICATION_STRING " [options]");

cmdline.add_options()("principal", value<double>()->default_value(1000),

"The principal [$]")("rate", value<double>()->default_value(7),

"The interest rate [%]")("cp", value<int>()->default_value(12),

"The compound period [months]")("time",

value<int>()->default_value(12 * 30),

"The time money is invested [months]");

hpx::init_params init_args;

init_args.desc_cmdline = cmdline;

return hpx::init(argc, argv, init_args);

}

Next we look at hpx_main.

int hpx_main(variables_map& vm)

{

{

using hpx::dataflow;

using hpx::make_ready_future;

using hpx::shared_future;

using hpx::unwrapping;

hpx::id_type here = hpx::find_here();

double init_principal =

vm["principal"].as<double>(); //Initial principal

double init_rate = vm["rate"].as<double>(); //Interest rate

int cp = vm["cp"].as<int>(); //Length of a compound period

int t = vm["time"].as<int>(); //Length of time money is invested

init_rate /= 100; //Rate is a % and must be converted

t /= cp; //Determine how many times to iterate interest calculation:

//How many full compound periods can fit in the time invested

// In non-dataflow terms the implemented algorithm would look like:

//

// int t = 5; // number of time periods to use

// double principal = init_principal;

// double rate = init_rate;

//

// for (int i = 0; i < t; ++i)

// {

// double interest = calc(principal, rate);

// principal = add(principal, interest);

// }

//

// Please note the similarity with the code below!

shared_future<double> principal = make_ready_future(init_principal);

shared_future<double> rate = make_ready_future(init_rate);

for (int i = 0; i < t; ++i)

{

shared_future<double> interest =

dataflow(unwrapping(calc), principal, rate);

principal = dataflow(unwrapping(add), principal, interest);

}

// wait for the dataflow execution graph to be finished calculating our

// overall interest

double result = principal.get();

std::cout << "Final amount: " << result << std::endl;

std::cout << "Amount made: " << result - init_principal << std::endl;

}

return hpx::finalize();

}

Here we find our command line variables read in, the rate is converted from a

percent to a decimal, the number of calculation iterations is determined, and

then our shared_futures are set up. Notice that we first place our principal and

rate into shares futures by passing the variables init_principal and

init_rate using hpx::make_ready_future.

In this way hpx::shared_future<double> principal

and rate will be initialized to init_principal and init_rate when

hpx::make_ready_future<double> returns a future containing

those initial values. These shared futures then enter the for loop and are

passed to interest. Next principal and interest are passed to the

reassignment of principal using a hpx::dataflow. A dataflow

will first wait for its arguments to be ready before launching any callbacks, so

add in this case will not begin until both principal and interest

are ready. This loop continues for each compound period that must be calculated.

To see how interest and principal are calculated in the loop, let us look

at calc_action and add_action:

// Calculate interest for one period

double calc(double principal, double rate)

{

return principal * rate;

}

///////////////////////////////////////////////////////////////////////////////

// Add the amount made to the principal

double add(double principal, double interest)

{

return principal + interest;

}

After the shared future dependencies have been defined in hpx_main, we see the following statement:

double result = principal.get();

This statement calls hpx::future::get on the shared future

principal which had its value calculated by our for loop. The program will wait

here until the entire dataflow tree has been calculated and the value assigned

to result. The program then prints out the final value of the investment and the

amount of interest made by subtracting the final value of the investment from

the initial value of the investment.

Local to remote#

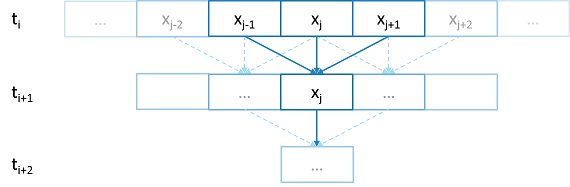

When developers write code they typically begin with a simple serial code and build upon it until all of the required functionality is present. The following set of examples were developed to demonstrate this iterative process of evolving a simple serial program to an efficient, fully-distributed HPX application. For this demonstration, we implemented a 1D heat distribution problem. This calculation simulates the diffusion of heat across a ring from an initialized state to some user-defined point in the future. It does this by breaking each portion of the ring into discrete segments and using the current segment’s temperature and the temperature of the surrounding segments to calculate the temperature of the current segment in the next timestep as shown by Fig. 2 below.

Fig. 2 Heat diffusion example program flow.#

We parallelize this code over the following eight examples:

The first example is straight serial code. In this code we instantiate a vector

U that contains two vectors of doubles as seen in the structure

stepper.

struct stepper

{

// Our partition type

typedef double partition;

// Our data for one time step

typedef std::vector<partition> space;

// Our operator

static double heat(double left, double middle, double right)

{

return middle + (k * dt / (dx * dx)) * (left - 2 * middle + right);

}

// do all the work on 'nx' data points for 'nt' time steps

space do_work(std::size_t nx, std::size_t nt)

{

// U[t][i] is the state of position i at time t.

std::vector<space> U(2);

for (space& s : U)

s.resize(nx);

// Initial conditions: f(0, i) = i

for (std::size_t i = 0; i != nx; ++i)

U[0][i] = double(i);

// Actual time step loop

for (std::size_t t = 0; t != nt; ++t)

{

space const& current = U[t % 2];

space& next = U[(t + 1) % 2];

next[0] = heat(current[nx - 1], current[0], current[1]);

for (std::size_t i = 1; i != nx - 1; ++i)

next[i] = heat(current[i - 1], current[i], current[i + 1]);

next[nx - 1] = heat(current[nx - 2], current[nx - 1], current[0]);

}

// Return the solution at time-step 'nt'.

return U[nt % 2];

}

};

Each element in the vector of doubles represents a single grid point. To

calculate the change in heat distribution, the temperature of each grid point,

along with its neighbors, is passed to the function heat. In order to

improve readability, references named current and next are created

which, depending on the time step, point to the first and second vector of

doubles. The first vector of doubles is initialized with a simple heat ramp.

After calling the heat function with the data in the current vector, the

results are placed into the next vector.

In example 2 we employ a technique called futurization. Futurization is a method

by which we can easily transform a code that is serially executed into a code

that creates asynchronous threads. In the simplest case this involves replacing

a variable with a future to a variable, a function with a future to a function,

and adding a .get() at the point where a value is actually needed. The code

below shows how this technique was applied to the struct stepper.

struct stepper

{

// Our partition type

typedef hpx::shared_future<double> partition;

// Our data for one time step

typedef std::vector<partition> space;

// Our operator

static double heat(double left, double middle, double right)

{

return middle + (k * dt / (dx * dx)) * (left - 2 * middle + right);

}

// do all the work on 'nx' data points for 'nt' time steps

hpx::future<space> do_work(std::size_t nx, std::size_t nt)

{

using hpx::dataflow;

using hpx::unwrapping;

// U[t][i] is the state of position i at time t.

std::vector<space> U(2);

for (space& s : U)

s.resize(nx);

// Initial conditions: f(0, i) = i

for (std::size_t i = 0; i != nx; ++i)

U[0][i] = hpx::make_ready_future(double(i));

auto Op = unwrapping(&stepper::heat);

// Actual time step loop

for (std::size_t t = 0; t != nt; ++t)

{

space const& current = U[t % 2];

space& next = U[(t + 1) % 2];

// WHEN U[t][i-1], U[t][i], and U[t][i+1] have been computed, THEN we

// can compute U[t+1][i]

for (std::size_t i = 0; i != nx; ++i)

{

next[i] =

dataflow(hpx::launch::async, Op, current[idx(i, -1, nx)],

current[i], current[idx(i, +1, nx)]);

}

}

// Now the asynchronous computation is running; the above for-loop does not

// wait on anything. There is no implicit waiting at the end of each timestep;

// the computation of each U[t][i] will begin as soon as its dependencies

// are ready and hardware is available.

// Return the solution at time-step 'nt'.

return hpx::when_all(U[nt % 2]);

}

};

In example 2, we redefine our partition type as a shared_future and, in

main, create the object result, which is a future to a vector of

partitions. We use result to represent the last vector in a string of

vectors created for each timestep. In order to move to the next timestep, the

values of a partition and its neighbors must be passed to heat once the

futures that contain them are ready. In HPX, we have an LCO (Local Control

Object) named Dataflow that assists the programmer in expressing this

dependency. Dataflow allows us to pass the results of a set of futures to a

specified function when the futures are ready. Dataflow takes three types of

arguments, one which instructs the dataflow on how to perform the function call

(async or sync), the function to call (in this case Op), and futures to the

arguments that will be passed to the function. When called, dataflow immediately

returns a future to the result of the specified function. This allows users to

string dataflows together and construct an execution tree.

After the values of the futures in dataflow are ready, the values must be pulled

out of the future container to be passed to the function heat. In order to

do this, we use the HPX facility unwrapping, which underneath calls

.get() on each of the futures so that the function heat will be passed

doubles and not futures to doubles.

By setting up the algorithm this way, the program will be able to execute as quickly as the dependencies of each future are met. Unfortunately, this example runs terribly slow. This increase in execution time is caused by the overheads needed to create a future for each data point. Because the work done within each call to heat is very small, the overhead of creating and scheduling each of the three futures is greater than that of the actual useful work! In order to amortize the overheads of our synchronization techniques, we need to be able to control the amount of work that will be done with each future. We call this amount of work per overhead grain size.

In example 3, we return to our serial code to figure out how to control the

grain size of our program. The strategy that we employ is to create “partitions”

of data points. The user can define how many partitions are created and how many

data points are contained in each partition. This is accomplished by creating

the struct partition, which contains a member object data_, a vector of

doubles that holds the data points assigned to a particular instance of

partition.

In example 4, we take advantage of the partition setup by redefining space

to be a vector of shared_futures with each future representing a partition. In

this manner, each future represents several data points. Because the user can

define how many data points are in each partition, and, therefore, how

many data points are represented by one future, a user can control the